Reconfigurable Computing for the Masses, Really?

New opportunities and long-standing challenges of a disruptive technology.

A workshop at and after FPL'15 to engage the research community in identifying and understanding the issues that must be addressed to enable the use of reconfigurable arrays by mainstream software programmers.

4th September 2015

Overview

Reconfigurable computing, or the use of fabrics such as FPGAs to accelerate computing tasks normally run on conventional general-purpose processors, has been around almost as long as FPGAs themselves. Yet very few FPGAs populate data centres, even fewer are on acceleration boards in our PCs, and none are in our laptops and tablets. We are now witnessing a new and exciting inflection point in the use of FPGAs: they are being viewed as potentially viable commercial off-the-shelf components directly in the path of software developers. Microsoft recently revealed Catapult, a server with FPGAs soon to be in use in large data centres to accelerate their Bing search engine. Intel also announced a new compute node that will integrate an FPGA with a large Xeon multicore processor. These types of announcements show industrial commitment to accelerate the transition of FPGAs into new application domains dominated by software programmers.

Enabling software developers to apply their skills to FPGAs has been a long-standing and, as yet, unmet research objective in reconfigurable computing. In the past, our inability to reach this goal did not greatly impact the adoption of FPGAs within the traditional markets of embedded and networking systems. The manufacturers of these systems employed sufficient numbers of hardware and system design engineers with skill sets in low-level programming and custom hardware design. The additional engineering effort and extended time to market required to tune an FPGA for peak performance was an acceptable system development cost. This is clearly not an option when FPGAs are to be integrated in conventional computing equipment, where short time to market and repeated updates to software are core to these companies' business models.

But obstacles to widespread FPGA adoption go well beyond the required skill set. Another major obstacle is the total lack of standardization. To start a serious programming project, one can simply use any commodity laptop as a first platform, install any of several available operating systems including several perfectly solid free options, use some typically fairly robust programming environment in a language of choice, and leverage amazing amounts of free middleware. A moderately experienced software programmer can be evaluating and testing basic ideas literally within hours of project start. Contrast this with someone interested in accelerating a computing job with FPGAs: devices across vendors are fundamentally different and incompatible; RTL programs, albeit portable in principle, are definitely not so in practice; development software is completely vendor specific, with very significant differences in feature sets and often with a level of robustness well below common software standards; boards, even with identical FPGA chips, are totally incompatible, and getting even the simplest host-to-FPGA communication working may take skilled designers weeks; existing free hardware components and system software packages are seldom truly robust and most likely not usable without significant investment, usually because they were written for a different device and/or a different board. In practice, it may take from weeks to months before meaningful experimentation can start, even for experienced designers. Using GPUs as accelerators has some aspects in common with using FPGAs, but the overall experience has been made to resemble much more that of a software job. Will this ever happen for FPGAs?

Computing applications present a unique opportunity. Within embedded and real-time systems, a cheaper solution that could not meet timing requirements was simply not acceptable, thereby limiting development to designers able to extract the last drop of efficiency. These newly emerging computing domains are happy with solutions that optimize for economics as long as the performance is good enough. Such trade-offs between economics and performance are commonplace in software development environments and compilation, but not in hardware design flows and synthesis. How can such an opportunity be seized if the fundamental limiting factors of required skill sets and of the solidity of the programming ecosystem are not addressed?

Programme

The programme comprises three sessions of invited presentations: one in the morning as a closing special session of FPL in the main theatre of the Royal Institution, and two in the afternoon at Imperial College London. We will then conclude with a session including working groups to stimulate the audience and a panel.

| Royal Institution (4th September 2015, am) | |
|---|---|
| 10:40 | Opening Remarks |
| 10:50 | Session I: Speaking for programmers |
| | Jim Larus (École Polytechnique Fédérale de Lausanne, CH) |
| | Kunle Olukotun (Pervasive Parallelism Lab, Stanford University) |
| 12:15 | Lunch |
| Imperial College (4th September 2015, pm) | |
| 1:30 | Session II: New opportunities |
| | Andrew Putnam (Microsoft Research) |
| | P. K. Gupta (Intel) |
| 3:00 | Coffee Break |
| 3:30 | Session III: Software Environments |
| | Marco Platzner (University of Paderborn) |
| | Michaela Blott (Xilinx Labs) |
| | Paul Chow (University of Toronto) |
| 5:00 | Interactive Session |
| | Reconfigurable Computing for the Masses: Now, Later, or... Never? |
| | Moderator: Walid Najjar |
| | Participants: Michaela Blott, Paul Chow, P. K. Gupta, Jim Larus, Kunle Olukotun, Marco Platzner, Andrew Putnam |
| 6:00 | Closing Reception (offered by EcoCloud) |

About the Talks and the Speakers

Jim Larus

EPFL

Catapult the Masses

(slides)

Microsoft Catapult was an ambitious and successful reconfigurable computing project that accelerated the Bing search engine’s core ranking algorithm. It was built by a talented team of computer architects and hardware designers, which is not a scalable or reproducible model for most cloud computing projects. Did it have to be so hard? The answer is both yes and no. The systems engineering, which is fortunately reusable across projects, required considerable hardware (and software) expertise. But the search-specific aspects embody a design pattern of targeted, massively parallel custom processors and domain-specific languages that, with better tools, could be widely used by software developers to take advantage of reconfigurable hardware. This talk will briefly sketch the approach and discuss some of the changes necessary to the systems stack, domain-specific languages, compilers, and FPGA tools.

James Larus is Professor and Dean of the School of Computer and Communication Sciences (IC) at EPFL (École Polytechnique Fédérale de Lausanne). Prior to that position, Larus was a researcher, manager, and director in Microsoft Research for over 16 years and an assistant and associate professor in the Computer Sciences Department at the University of Wisconsin, Madison. Larus has been an active contributor to the programming languages, compiler, software engineering, and computer architecture communities.

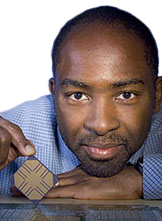

Kunle Olukotun

Stanford

Big Data Analytics in the Age of Accelerators

(slides)

Achieving high performance in a modern computing environment requires programs to run efficiently on heterogeneous hardware platforms composed of multicores, GPUs, clusters, and recently FPGAs. However, programming for this environment is extremely challenging due to the need to use multiple low-level programming models and then combine them in ad-hoc ways. To optimize applications both for modern hardware and for modern programmers, we need a programming model that is sufficiently expressive to easily support a variety of applications and sufficiently portable to execute efficiently on heterogeneous parallel hardware. Nested parallel patterns wrapped in domain-specific languages (DSLs) form a high-level programming model that has been shown to be capable of targeting architectures as diverse as multicore/NUMA, clusters, and GPUs. In this talk, I will describe the Delite DSL framework and how it can be used to extend the reach of nested parallel patterns to FPGAs.

Kunle Olukotun is the Cadence Design Systems Professor in the School of Engineering and Professor of Electrical Engineering and Computer Science at Stanford University. Olukotun is well known as a pioneer in multicore processor design and the leader of the Stanford Hydra chip multiprocessor (CMP) research project. Olukotun founded Afara Websystems to develop high-throughput, low-power multicore processors for server systems. The Afara multicore processor, called Niagara, was acquired by Sun Microsystems. Niagara-derived processors now power all Oracle SPARC-based servers. Olukotun currently directs the Stanford Pervasive Parallelism Lab (PPL), which seeks to proliferate the use of heterogeneous parallelism in all application areas using Domain Specific Languages (DSLs). Olukotun is an ACM Fellow and IEEE Fellow.

Andrew Putnam

Microsoft Research

Accelerating Large-Scale Datacenter Services

(slides)

For years FPGAs have shown huge potential to accelerate large-scale computing workloads. Yet despite the promise, FPGAs had not yet seen widespread adoption in datacenters, where their performance and energy-efficiency should be extremely attractive. This talk will describe some of the challenges that had held FPGAs back from adoption in the datacenter, and then continue to describe Catapult, a reconfigurable fabric that meets the strict requirements of modern datacenters, providing high energy-efficiency in the face of the slowing rate of CPU performance improvements. Catapult is now in production in some Microsoft datacenters, with numerous applications demonstrated at various scales, including Bing ranking, machine learning using Convolutional Neural Networks (CNNs), compression, and software defined networks. However, while getting FPGAs into the datacenter is a critical first step, there are still major challenges to making it viable in the long term. This talk will also address these challenges, such as making the programming environment accessible to software development teams, ensuring that datacenter operators can maintain and debug existing platforms at scale, and enabling datacenter architects to continue to push future technology improvements into the datacenter without abandoning existing software stacks.

Andrew Putnam is a Principal Research Hardware Development Engineer in the Microsoft Research New Experiences and Technologies (NExT) division. He joined Microsoft Research in 2009 after receiving his Ph.D. in Computer Science & Engineering from the University of Washington. His research focuses on accelerating data center applications with novel hardware such as FPGAs, and on the design of energy-efficient computer architectures. He was the first FPGA engineer on the Catapult project at Microsoft, which became the first to introduce FPGAs into a production datacenter.

P. K. Gupta

Intel

Intel® Xeon® + FPGA Platform for the Data Center

(slides)

This talk discusses the use of Intel® Xeon® platforms with tightly coupled FPGA accelerators to deliver better performance efficiency for targeted workloads. The talk will describe the features and benefits of the platform and discuss the hardware and software programming interfaces that will be forward compatible with future generations of the platform. We will also discuss our vision of deploying this platform in the Cloud, managed as part of the SDI pools of resources by the OpenStack orchestration layer, to accelerate Big Data, High Performance Computing, and Cloud workloads.

P. K. Gupta (PK) is the Director of Cloud Platform Technology in the Data Center Group / Cloud Platform Group at Intel Corporation. He is responsible for developing platform technologies for accelerating cloud workloads, including the Xeon-FPGA acceleration platform. Prior to that, he was the CTO of Intel's Modular Communications Platform Division and the Director of Engineering of the Network Building Block Division in Intel's Communications Infrastructure Group. PK has been with Intel since the acquisition of Dialogic in 1999. He joined Dialogic in 1996 and held various engineering positions, including VP of Engineering. Prior to Dialogic, PK was at Hughes, where he led the development of satellite and cellular communication products. PK holds 15 patents and is the author of numerous papers for journals and conference proceedings. PK holds a PhD in Electrical Engineering from the University of Rhode Island and an MBA from the Wharton Business School at the University of Pennsylvania.

Marco Platzner

U. Paderborn

ReconOS: Extending OS Services over FPGAs

(slides)

ReconOS is an operating system for reconfigurable computers that offers a unified multi-threaded programming model and operating system services for threads executing in software and threads mapped to reconfigurable hardware. The operating system interface allows hardware threads to interact with software threads using well-known mechanisms such as semaphores, mutexes, condition variables, and message queues. By semantically integrating hardware accelerators into a standard operating system environment, ReconOS supports a structured application development process and rapid design space exploration. In this talk, I will present ReconOS and report on our experience with using operating system abstractions in embedded and high-performance reconfigurable computing systems.

Marco Platzner is Professor of Computer Engineering at the University of Paderborn. Previously, he held research positions at the Computer Engineering and Networks Lab at ETH Zurich, Switzerland, the Computer Systems Lab at Stanford University, USA, the GMD - Research Center for Information Technology (now Fraunhofer IAIS) in Sankt Augustin, Germany, and the Graz University of Technology, Austria. His research interests include reconfigurable computing, hardware-software codesign, and parallel architectures. Marco Platzner is a member of the board of the Paderborn Center for Parallel Computing and the board of the Paderborn Institute of Advanced Studies in Computer Science and Engineering. Previously, he served on the board of the Advanced System Engineering Center of the University of Paderborn and was Head of the Computer Science Department at the University of Paderborn.

Michaela Blott

Xilinx Labs

Programming and Benchmarking FPGAs with Software-Centric Design Entries

(slides)

The end of Dennard scaling and increasing performance to cost ratios on multicore architectures have recently stimulated increased interest in FPGAs. Driven by this need, new software-centric design environments are emerging that can tremendously boost the productivity of designers and open up FPGA acceleration to the masses of software engineers. During this talk, we will present the latest advances in software-centric design environments and elaborate on our ongoing efforts within the Xilinx research organization to benchmark and characterize a wide spectrum of applications with FPGAs, GPUs, Xeons and Xeon Phis.

Michaela Blott graduated from the University of Kaiserslautern in Germany. She has worked in both research institutions (ETH and Bell Labs) and development organizations, and was deeply involved in large-scale international collaborations such as NetFPGA-10G. Today, she works as a principal engineer at the Xilinx labs in Dublin, heading a team of international researchers investigating reconfigurable computing for data centers and other new application domains. Her expertise spans data centers, high-speed networking, emerging memory technologies and distributed computing systems, with an emphasis on building complete implementations.

Paul Chow

U. Toronto

Did I Just Do That on a Bunch of FPGAs?

(slides)

Even with high-level synthesis that enables acceptable hardware to be generated from languages such as C, C++, and OpenCL, there is still much that is needed to make FPGAs accessible to the software programmer. The approach taken at the University of Toronto is to adapt existing software programming models and application frameworks so that they can incorporate FPGAs in a way that is transparent to the application developer. Our particular focus is on environments where applications can be scaled to thousands of FPGAs. This talk will highlight our work on building infrastructure to support programming models and applications that can work on top of large-scale heterogeneous systems. These systems are supported by virtualization and resource management provided by OpenStack and OpenFlow. To make this into a strong ecosystem will require the development of open standards for software APIs and hardware abstraction layers for FPGA-based processors.

Paul Chow is a Professor in the Department of Electrical and Computer Engineering at the University of Toronto, where he holds the Dusan and Anne Miklas Chair in Engineering Design. Prior to joining UofT in 1988, he was at the Computer Systems Laboratory at Stanford University, Stanford, CA, as a Research Associate, where he was a major contributor to an early RISC microprocessor design called MIPS-X, one of the first microprocessors with an on-chip instruction cache and the root of many concepts used in processors today. His research interests include high performance computer architectures, reconfigurable computing, embedded and application-specific processors, and field-programmable gate array architectures and applications. Paul was the Program Chair for the 2008 ACM/SIGDA International Symposium on Field-Programmable Gate Arrays (FPGA 2008), the premier conference for FPGAs, and General Chair for FPGA 2009. In 2011, he was the Program Chair for the IEEE Symposium on Field-Programmable Custom Computing Machines (FCCM 2011), the main conference for the reconfigurable computing area. He was the FCCM 2012 General Chair. In addition, Paul is on the technical program committee for the four main FPGA conferences: FPGA, FCCM, FPL, FPT.

Organizers

Paolo Ienne (École Polytechnique Fédérale de Lausanne, CH)

David Andrews (University of Arkansas, US)

Walid Najjar (University of California Riverside, US)

Registration

Please use the FPL'15 main site to register and participate.